Should Emojis Be More Incorporated in Healthcare AI?

by Vince Hartman

Nov 13, 2025

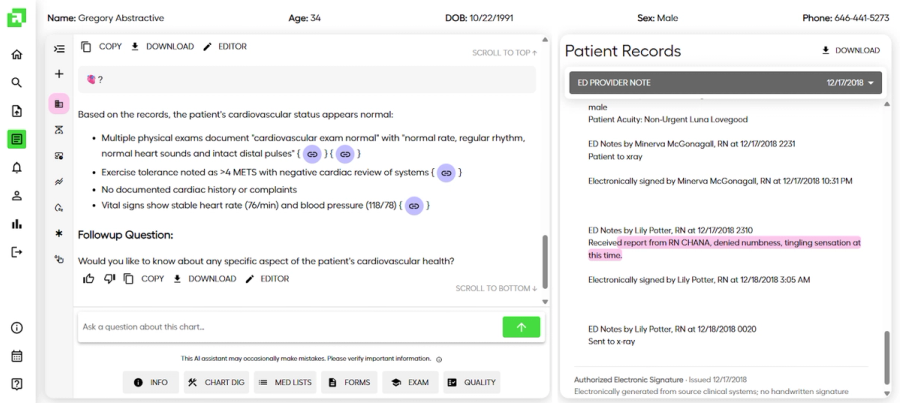

Languages evolve. Emojis have taken off with text messaging. And they can help us communicate our concepts, feelings, and emotions with fewer words. Healthcare is intertwined with language… and in the last decade, there has been a more concerted effort to “modernize” our healthcare lingo to better include emojis – research has shown they can help us communicate our pain scores as accurately as numeric scales in some settings (😖➡️🙂 scales) and speed up symptom check-ins/triage with quick taps (🤒, 🤕, 🤢). A great example is the work of Dr. Shuhan He (an EM physician and informaticist) who pushed for and co-authored the Unicode proposals that led to the anatomical heart and lungs emojis being approved in 2020.

Large language models are trained on a general corpus of language. And since the internet is full of emojis, it stands to reason that our AI is very familiar with these icons and how to use them “appropriately.” ChatGPT loves ⚠️ and 🛑 and often uses emojis to communicate concepts or clarify tone.

When you input a query into an LLM, the text is broken into individual tokens. A single token can represent a whole word, but in common tokenizers it often represents only ~1-4 characters (sometimes ~5-6). Tokens are the fundamental language of an LLM. Emojis are broken into tokens as well. For example, 👨‍⚕️ is built from the subcomponents “man” + zero-width joiner (ZWJ) + “medical symbol”, and the tokenizer sees those subcomponents rather than a single picture. As you can see, an emoji can pack a rich contextual mapping into a small space (i.e., you can say what you mean in fewer words).
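To make the decomposition concrete, here is a minimal Python sketch that lists the Unicode code points behind the health worker emoji, using only the standard library. It shows the raw pieces (plus a trailing variation selector that asks for emoji-style rendering), not the output of any particular LLM tokenizer. Byte-level tokenizers typically start from the UTF-8 bytes, and how they group those bytes into tokens varies by model. The names `HEALTH_WORKER`, `describe`, and `utf8_length` are illustrative.

```python
import unicodedata

# The "man health worker" emoji written code point by code point:
# U+1F468 (man), U+200D (zero-width joiner), U+2695 (medical symbol),
# U+FE0F (variation selector requesting emoji-style rendering).
HEALTH_WORKER = "\U0001F468\u200D\u2695\uFE0F"


def describe(text):
    """Return (code point, Unicode name) for every code point in text."""
    return [(f"U+{ord(ch):04X}", unicodedata.name(ch, "<unnamed>")) for ch in text]


def utf8_length(text):
    """Number of UTF-8 bytes, the raw material for byte-level tokenizers."""
    return len(text.encode("utf-8"))


if __name__ == "__main__":
    for code_point, name in describe(HEALTH_WORKER):
        print(code_point, name)
    print("UTF-8 bytes:", utf8_length(HEALTH_WORKER))
```

What looks like one symbol on screen is several code points underneath, which is why a single emoji can still cost a handful of tokens.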

When an LLM “reasons”, it is calculating how all these tokens relate to each other mathematically. If you input a large number of tokens, the contextual meaning between each pair of tokens is diluted as the relationships become sparser. So in theory, using certain images (or emojis) can give the LLM a richer understanding with fewer tokens, and better performance as a result.
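The intuition can be illustrated with a toy calculation. The sketch below assumes a simplified softmax attention in which one relevant token has a higher score than every other token; the scores (2.0 and 0.0) are made up for illustration, and real models learn their scores, so this is a picture of the hypothesis rather than a measurement. The function names are illustrative.

```python
import math


def pair_count(n_tokens):
    """Number of distinct token pairs in a sequence of n_tokens."""
    return n_tokens * (n_tokens - 1) // 2


def softmax(scores):
    """Numerically stable softmax over a list of scores."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def weight_on_relevant(n_tokens, relevant_score=2.0, other_score=0.0):
    """Attention weight on one relevant token among n_tokens - 1 others.

    The scores are hypothetical constants chosen for illustration.
    """
    scores = [relevant_score] + [other_score] * (n_tokens - 1)
    return softmax(scores)[0]


if __name__ == "__main__":
    for n in (10, 100, 1000):
        print(n, pair_count(n), round(weight_on_relevant(n), 4))
```

Under these assumptions the number of pairs grows quadratically with input length, while the share of attention landing on the one relevant token shrinks as more tokens compete for it.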

DeepSeek is currently exploring this underlying hypothesis by converting text/documents into images for “vision-text compression,” alongside standard image-text training. Their initial results show large token-count reductions with high decoding accuracy at moderate compression.

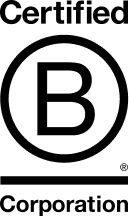

Likewise at Abstractive, we have seen early signals when interacting with our fine-tuned LLM that an emoji query can produce an answer as accurate as a longer written query, while using fewer words. For example, if you ask our RAG system the question “🫀?”, you get the following response:

Based on the records, the patient's cardiovascular status appears normal: (1) Multiple physical exams document "cardiovascular exam normal" with "normal rate, regular rhythm, normal heart sounds and intact distal pulses", (2) Exercise tolerance noted as >4 METS with negative cardiac review of systems, (3) No documented cardiac history or complaints, (4) Vital signs show stable heart rate (76/min) and blood pressure (118/78)

Our team has tried emojis such as 🫀, 🫁, 🧠, and even the eggplant emoji 🍆, which produced a response focused on fertility treatments for the patient.

While we can see that emojis may, at least hypothetically, improve the performance of LLMs, is healthcare willing to accept their incorporation as a means of improving patient care? If a large healthcare enterprise looked under the hood at the labeled pairs, few-shot learning examples, and doctors' communications with AI, and found rich emojis included there, would healthcare be willing to accept the evolution of its own language? We think so at Abstractive. Just like the complex Greek and Latin terms of medical terminology, the everyday internet language of emoji has a place too.

At Abstractive Health, our mission is simple: empower clinicians with complete, accessible, and actionable patient data when they need it most. By delivering higher retrieval rates, requiring minimal inputs, providing faster access, and leading the field in clinically validated summarization, we’re helping clinicians make faster, safer, and more informed decisions for their patients.

Clinicians can sign up here for free and quickly see our technology in action for the patients they care for.
