Importance of Sentence Matching with AI Clinical Summarization

by Vince Hartman

Nov 18, 2025

When you summarize, you’re asking to be trusted. You’re deliberately excluding information, so how you reference the original sources becomes critical. During my early research and clinical interviews, I discovered that traditional reference citations, like those you find on Wikipedia or in journal articles, are insufficient as the basis of trust for a medical chart summary. It was my first real “aha” moment in this space.

Before we read a summary, we fall somewhere on a continuum from no knowledge of the content at all to being super familiar with it. For example, if you read an AI summary of a video call transcript right after the meeting, you can skim it quickly because you’re already familiar with the content: you lived the conversation. In that case, “getting up to speed” does not mean creating a new mental map; you are simply reconstructing one you’ve already formed. But if the summary is your first exposure to new information, it doesn’t just compress knowledge, it creates your internal map of that content.

What makes AI summarization of a full medical record higher risk than many other AI tasks is that a doctor often has no baseline knowledge of the patient. Even if a summary is 95% accurate, the doctor does not yet have a cognitive map for discerning which 5% of the summary is inaccurate. That makes even small inaccuracies deeply concerning for the clinician. Going back to the video call example, if the voice transcription is 95% accurate, it’s easier to find the incorrect parts because you were part of that conversation.

And even though recent studies demonstrate that AI has higher baseline accuracy than a human doctor at producing medical summaries (such as an LLM vs. a resident producing the hospital course section of the discharge summary), the way an LLM gets things wrong is not logically consistent. It’s why the field has labeled these inaccuracies “hallucinations”. They feel random and hard to predict, unlike the more consistent mistakes doctors might make.

With this baseline, we discovered in 2021, before we even founded Abstractive Health, that traditional reference citations wouldn’t work as the foundation for trusted medical summaries. What doctors expect from an AI medical record summary is 1:1 sentence-matching provenance, where every sentence in the summary is linked to the exact sentence in the source document. Even 99% accuracy alone is insufficient, and it would be reckless to roll out to clinical users without deep sentence-level provenance.

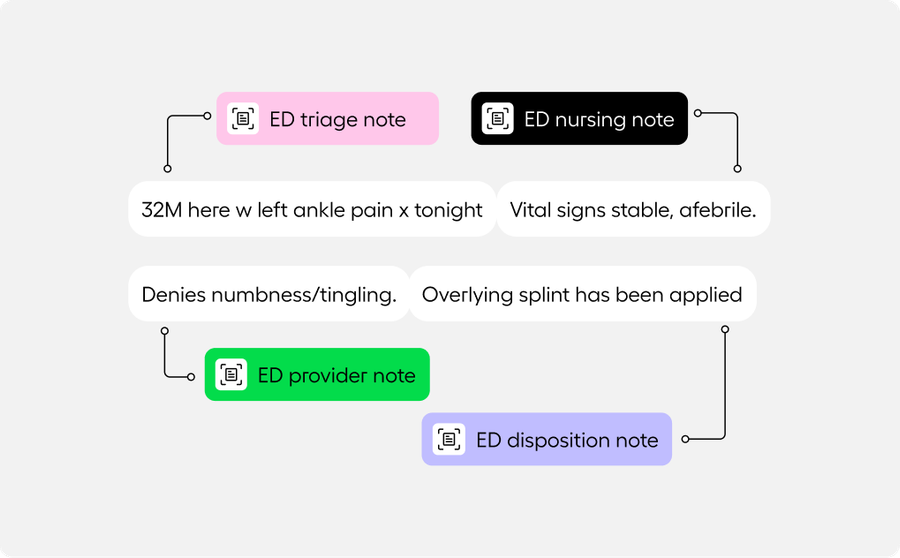

Sentence-level provenance goes a step further than traditional reference citations. Rather than sharing a reference only for the important factual claims in a paragraph, it’s a system where every sentence has an explicit, inspectable link to the source content. The UI treats the summary as a collection of individually assertable facts that are deeply linked to the source documents. Users can inspect any sentence as an individual claim and accept or reject its content. Traditional references work well for readers who mostly already trust the content and only check references to dive deeper. Clinicians, on the other hand, need the ability to inspect where every piece of clinical information comes from.

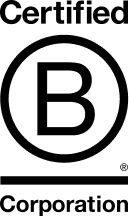

For example, a sentence like “He also hit his head on the wall and has an abrasion with swelling, no loc” should be clickable. When the clinician clicks it, they should jump straight to the ED note from that visit with that exact sentence highlighted.
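To make the idea concrete, here is a minimal Python sketch of what a sentence-level provenance link could look like as data. This is not our production schema; the names `SourceRef`, `SummarySentence`, `unlinked`, and `highlight`, the character-offset design, the `[[ ]]` highlight markers, and the sample note text are all assumptions made up for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class SourceRef:
    """Pointer to one exact sentence in a source document (hypothetical schema)."""
    doc_id: str  # identifier of the source note, e.g. an ED note
    start: int   # character offset where the source sentence begins
    end: int     # character offset just past the source sentence


@dataclass(frozen=True)
class SummarySentence:
    text: str
    source: Optional[SourceRef]  # None means the sentence has no provenance


def unlinked(summary: list[SummarySentence]) -> list[SummarySentence]:
    """Return the summary sentences that cannot be traced to a source sentence."""
    return [s for s in summary if s.source is None]


def highlight(sentence: SummarySentence, documents: dict[str, str]) -> str:
    """Return the source note with the linked sentence wrapped in [[ ]] markers."""
    ref = sentence.source
    if ref is None:
        raise ValueError("sentence has no provenance link")
    doc = documents[ref.doc_id]
    return doc[:ref.start] + "[[" + doc[ref.start:ref.end] + "]]" + doc[ref.end:]


# Made-up sample data for illustration only.
claim = "He also hit his head on the wall and has an abrasion with swelling, no loc"
documents = {"ed-note-1": "Pt fell at home. " + claim + "."}
start = documents["ed-note-1"].index(claim)

summary = [
    SummarySentence(claim, SourceRef("ed-note-1", start, start + len(claim))),
    SummarySentence("A sentence with no link back to the chart.", None),
]

print(highlight(summary[0], documents))  # what a click on the sentence would show
print(len(unlinked(summary)))            # sentences that fail the 1:1 requirement
```

In a sketch like this, a click on a summary sentence resolves to one document and one highlighted span, and any sentence returned by `unlinked` is a sentence the clinician has no way to verify.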

If you have a 40-sentence summary, putting a small link icon next to every sentence will feel too busy. Instead, you have to create a UI that is super intuitive, so that hovering over or clicking on a sentence quickly takes you to the source content. Traditional reference linking offers a cleaner, more familiar reading experience, but it is not inherently designed for deep analysis and fact checking.

When you see only traditional reference linking on an AI medical summary, it usually means the system was designed by analogy to Wikipedia, not from first principles of how clinicians actually build trust. It assumes that an AI medical record summary is equivalent to every other summary in day-to-day life. It inherently is not, and it requires tailored UI/UX.

Over the next year, as more and more AI summarization begins to roll out, you are going to hear clinicians complain about inaccuracies. In reality, the summaries may already be more accurate than those written by humans, and what clinicians are really expressing is that they can’t trust a summary that doesn’t have deep sentence-level provenance. This isn’t an AI problem; it’s a software problem.

This is why our team has treated sentence-level provenance as a non-negotiable requirement from day one. At Abstractive Health, our mission is simple: empower clinicians with complete, accessible, and actionable patient data when they need it most. By delivering higher retrieval rates, requiring minimal inputs, providing faster access, and leading the field in clinically validated summarization, we’re helping clinicians make faster, safer, and more informed decisions for their patients.

Clinicians can sign up here and try our technology for free, with quick access to see it in action for the patients they care for.
